Duplicate, submitted URL not selected as canonical: How to Fix It

What is Content Duplication in SEO?

Content duplication occurs when identical or similar content appears on multiple pages within a website or across different websites. This can confuse search engines, making it difficult for them to determine which version of the content to rank. While Google doesn't penalize duplicate content outright, it selects one version as the canonical and filters out the others, which may reduce your page's visibility in search results if it picks a version other than the one you prefer.

Understanding Content Duplication and SEO Challenges

For website owners and digital marketers, content duplication can be one of the most frustrating challenges in SEO. Even when everything seems fine on the surface, unexpected issues can arise, leaving you puzzled about why your page isn’t ranking as it should.

If you've landed here, you're likely facing this problem. One common oversight is not submitting your URL to Google Search Console, a free tool widely used by digital marketing agencies and website design companies to find and fix errors and to optimize sites for Google Search.

Have You Submitted Your URL?

Submitting your URLs to Google Search Console is essential for every website owner. If you're unsure how to do this, we've got you covered: check out our guide on how to submit URLs to Google Search. Search Console also tracks your site's performance, which helps you evaluate whether your marketing agency is delivering the results you expect.

Tackling URL Duplication

Duplicate content issues often stem from the same content being accessible at multiple URLs. For instance, your homepage might be reachable via:

- http://www.kabeeroptics.com

- https://www.kabeeroptics.com

- http://kabeeroptics.com/index.php

To a search engine, each of these URLs looks like a separate page, which creates duplication. The fix is to set up 301 redirects from the duplicate versions to your preferred URL, or to add canonical tags that point Google toward it. Learn more about canonicalization and how it consolidates duplicate pages.

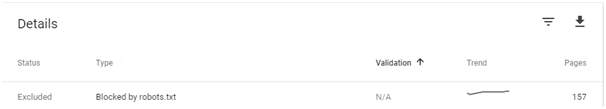
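The 301 redirect itself is configured on your web server or CMS, but the mapping it needs is simple to express. Below is a minimal Python sketch, assuming the preferred version of the site is `https://www.kabeeroptics.com` with no `index.php` in the path. The helpers `canonical_url` and `canonical_link_tag` are hypothetical, not part of any SEO tool. The sketch collapses the three homepage variants listed above into one URL and builds the matching canonical tag for the page's `<head>`.

```python
import html
from urllib.parse import urlsplit, urlunsplit

# Assumption: the preferred (canonical) form of every URL uses HTTPS,
# the www host, and no trailing index file in the path.
PREFERRED_SCHEME = "https"
PREFERRED_HOST = "www.kabeeroptics.com"
SITE_HOSTS = ("kabeeroptics.com", "www.kabeeroptics.com")
INDEX_FILES = ("index.php", "index.html")


def canonical_url(url: str) -> str:
    """Map any duplicate variant of a URL to the single preferred form."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    # Treat the bare domain and the www subdomain as the same site.
    if host in SITE_HOSTS:
        host = PREFERRED_HOST
    path = parts.path or "/"
    # Drop a trailing index file so /index.php and / collapse together.
    for index_file in INDEX_FILES:
        if path.endswith("/" + index_file):
            path = path[: -len(index_file)]
            break
    return urlunsplit((PREFERRED_SCHEME, host, path, parts.query, ""))


def canonical_link_tag(url: str) -> str:
    """Build the <link rel="canonical"> tag, escaping the URL for HTML."""
    href = html.escape(canonical_url(url), quote=True)
    return f'<link rel="canonical" href="{href}">'


for variant in [
    "http://www.kabeeroptics.com",
    "https://www.kabeeroptics.com",
    "http://kabeeroptics.com/index.php",
]:
    print(variant, "->", canonical_url(variant))
```

Each of the three variants maps to `https://www.kabeeroptics.com/`, which is the URL your redirects and canonical tags should agree on.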

Robots.txt: Keep It Clean

A clean robots.txt file plays a crucial role in SEO. Unnecessary blocking rules can trigger errors in Google Search Console. While Google says these warnings don't directly affect rankings, we've observed significant improvements when they are addressed. For detailed guidance, read Google's article on robots.txt.

Keeping your robots.txt file straightforward ensures search engines can crawl your site efficiently.
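To catch accidental blockages before Search Console reports them, you can test your important URLs against your robots.txt rules. The sketch below uses Python's standard `urllib.robotparser` module. The robots.txt content, the URLs, and the `blocked_urls` helper are hypothetical examples. This parser applies rules in file order with simple prefix matching and does not reproduce every detail of how Googlebot interprets wildcards, so treat it as a quick sanity check, not a substitute for Google's own tools.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: the /cart/ rule is intentional, but the
# /products/ rule accidentally blocks pages that are meant to rank.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /products/
"""

# Hypothetical pages you want Google to crawl and index.
IMPORTANT_URLS = [
    "https://www.kabeeroptics.com/",
    "https://www.kabeeroptics.com/products/sunglasses",
    "https://www.kabeeroptics.com/contact",
]


def blocked_urls(robots_txt: str, urls: list[str], agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt disallows for `agent`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(agent, url)]


print(blocked_urls(ROBOTS_TXT, IMPORTANT_URLS))
```

Here only the sunglasses page is reported, which points to the `/products/` rule as the one to remove.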

The Real Impact of Duplicate Content

You might wonder: Does duplicate content hurt my rankings? Google’s John Mueller clarified that duplicate content doesn’t inherently penalize your site. Instead, Google selects the most relevant version to display, ignoring duplicates. This process ensures your unique content gets the attention it deserves.

However, even if duplicate content doesn't hurt rankings, it can confuse search engines. Using canonical tags and keeping your XML sitemap accurate helps ensure that your preferred URLs are the ones Google indexes.

Pro Tip: Fix Your Sitemap

An accurate sitemap is vital for SEO success. Make sure it includes all your active, canonical URLs (and not the duplicate versions that redirect to them), and that the sitemap submitted in Google Search Console reflects your current site structure. If you're not sure how to create or optimize a sitemap, don't hesitate to reach out to us for help.
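As a minimal sketch, assuming you already have a list of live, canonical URLs (the ones below and the `build_sitemap` helper are hypothetical), Python's standard `xml.etree.ElementTree` module can produce a basic sitemap in the sitemaps.org format. It writes only `<loc>` entries; optional fields such as `<lastmod>` are left out for brevity.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

# Hypothetical list of live, canonical URLs. In practice this would come
# from your CMS or a crawl, after duplicate variants have been filtered out.
CANONICAL_URLS = [
    "https://www.kabeeroptics.com/",
    "https://www.kabeeroptics.com/products/sunglasses",
    "https://www.kabeeroptics.com/contact",
]


def build_sitemap(urls: list[str]) -> str:
    """Return sitemap XML with one <url><loc> entry per unique URL."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    # dict.fromkeys drops exact duplicates while keeping the original order.
    for url in dict.fromkeys(urls):
        url_el = ET.SubElement(urlset, "url")
        ET.SubElement(url_el, "loc").text = url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


print(build_sitemap(CANONICAL_URLS))
```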

What If You’ve Done Everything Right?

Sometimes, even after addressing content duplication, URL canonicalization, and robots.txt issues, Google may still not display your page prominently. This could be due to:

- Outdated Content: Refresh your content to make it more engaging and relevant.

- Crawl Priority: Use internal linking to help Google discover the page more easily.

- Perceived AI Content: Ensure your content reads naturally and includes human-like expressions.
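One way to act on the crawl-priority point is to look for pages that no other page on your site links to, since crawlers have a harder time discovering them. The sketch below assumes you already have your internal link graph as a Python dictionary, for example from a crawl of your own site. The pages shown and the `orphan_pages` helper are hypothetical. It lists the pages with no inbound internal links.

```python
# Hypothetical internal link graph: each page maps to the pages it links to.
LINKS = {
    "/": {"/products/sunglasses", "/contact"},
    "/products/sunglasses": {"/", "/contact"},
    "/contact": {"/"},
    "/blog/duplicate-content-fix": {"/"},  # no page links to this one
}


def orphan_pages(links: dict[str, set[str]]) -> list[str]:
    """Return pages that no other page links to (self-links are ignored)."""
    inbound = {page: 0 for page in links}
    for source, targets in links.items():
        for target in targets:
            if target != source and target in inbound:
                inbound[target] += 1
    # The homepage is usually reached directly, so it is never flagged.
    return sorted(page for page, count in inbound.items() if count == 0 and page != "/")


print(orphan_pages(LINKS))
```

In this example only `/blog/duplicate-content-fix` is flagged; linking to it from a related page is a simple way to make it easier to discover.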

If you’ve resolved these issues but still see the same errors, remember that Google might need time to recrawl and re-index your page.

Final Words: Patience and Precision Pay Off

SEO isn't a one-time task; it's a continuous effort. Whether you optimize content, fix technical issues, or improve user experience, the results will follow if you stay consistent. If your page still isn't performing as expected, feel free to contact us for expert advice.
